23.02.2026 · 15 min read

HarborGate.ai: From Enterprise Governance to the Operating System for a New Kind of Company

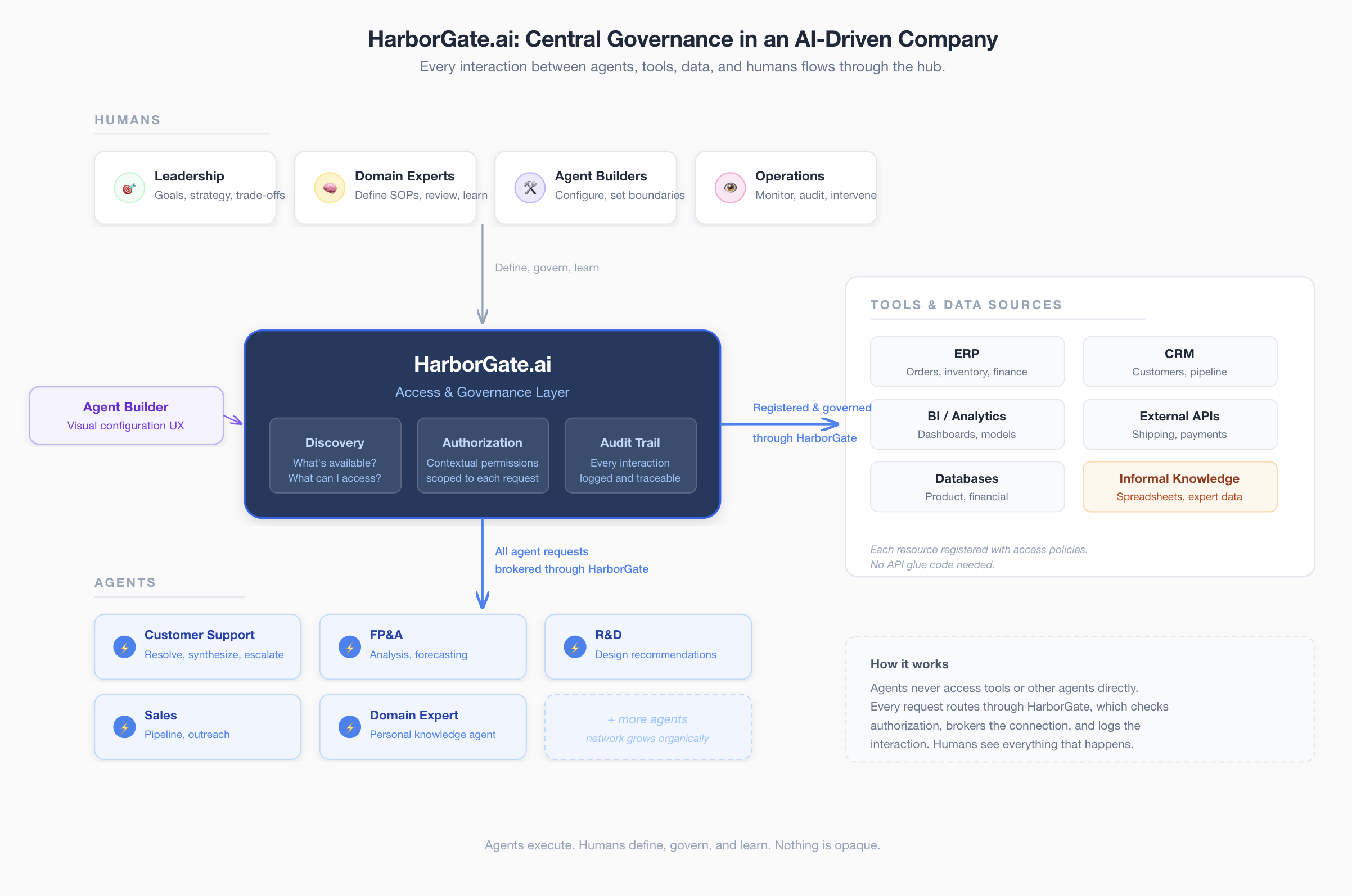

Infrastructure that connects systems, agents, and human expertise, with authorization, discovery, and auditability built in.

Note: This is a brief for a hypothetical company with the working name HarborGate.

Designed to keep humans in control today, and to let anyone run a complex company tomorrow.

Where This Is Going

AI agents will change how companies operate. We think the most interesting outcome isn't automation. It's enabling small teams to do what previously required hundreds of people, while keeping human judgment at the center.

This happens in two phases. First, we build the governance and connectivity layer that enterprises need to deploy agent networks responsibly. Second, we package the organizational patterns we learn into reusable templates, so that a small team can stand up the operational backbone of a complex company without building it from scratch.

We're not aiming for fewer companies with fewer people. We want more companies, more brands, more creative output. A new wave of entrepreneurship where agent networks handle operations and humans supply meaning, direction, and taste.

Problem

Companies are deploying AI agents across their operations. At the same time, they already have dozens of integrated systems, APIs, and databases, plus a vast amount of knowledge that lives informally in spreadsheets, personal models, and people's heads.

The moment any of these need to work together across domain boundaries, everything breaks down. A customer agent pulling order data from the ERP, a finance agent accessing a BI analyst's market model, a support agent checking repair status: each of these cross-boundary interactions becomes custom glue code with bespoke security, zero visibility, and no audit trail.

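This problem compounds as organizations move from isolated agents to networked collaboration: each new agent or system may need its own integration with every one already in place, so the number of point-to-point connections grows far faster than the number of participants. The bottleneck isn't the agents. It's the absence of shared infrastructure.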

Principles

These are not aspirations. They are design constraints baked into the architecture.

Human learning stays in the loop. Agents execute workflows. Humans define, evaluate, and improve them. When agents learn from experience, humans understand what was learned and can approve, reject, or modify it. The moment agent learning becomes opaque, we've failed.

Auditability over efficiency. There will always be pressure to let agents act faster by reducing oversight. We reject the framing that oversight is friction. Transparency is a feature, not a cost.

Authorization reflects accountability. If a human is responsible for a decision, they control the agent making it. The agent network mirrors the structure of human accountability, not the other way around.

Reversibility by default. Agents prefer actions that can be undone. When an action can't be undone, a human gets pulled in.

No silent automation. When agent capabilities expand, when they start learning from experience or take on tasks that previously required human judgment, that transition is explicit. Users opt in with full understanding. Nothing gets automated quietly because it improves metrics.

In regulated industries, these principles are requirements. As agents grow more capable, they're what prevent the drift from collaboration into replacement.
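The reversibility principle is the easiest one to make concrete. Below is a minimal sketch, assuming an action counts as reversible when it ships with an undo step. The `Action` class, `run_action`, and the approval callback are hypothetical names for illustration, not a HarborGate API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    """A proposed agent action (hypothetical shape)."""
    name: str
    execute: Callable[[], str]
    undo: Optional[Callable[[], str]] = None  # None means it cannot be undone

    @property
    def reversible(self) -> bool:
        return self.undo is not None


def run_action(action: Action, human_approves: Callable[[Action], bool]) -> str:
    """Run reversible actions directly; pull a human in for irreversible ones."""
    if action.reversible or human_approves(action):
        return action.execute()
    return f"blocked: {action.name} needs human approval"


def reject_all(action: Action) -> bool:
    # Stand-in for a real review step: this reviewer rejects everything.
    return False


draft = Action("draft_refund", execute=lambda: "refund drafted",
               undo=lambda: "draft deleted")
payout = Action("send_payout", execute=lambda: "payout sent")  # no undo

result_draft = run_action(draft, reject_all)    # runs without review
result_payout = run_action(payout, reject_all)  # stopped until a human approves
```

The point of the shape is that the default path is the safe one: an action without an undo step cannot run unless a human says yes.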

Phase 1: Governed Agent Networks for Enterprises

HarborGate

HarborGate is a central access and governance layer that sits between all resources in an enterprise knowledge network. It brokers two types of resources:

Tools and data sources. APIs, databases, ERP endpoints, system integrations, and any structured data. These are predictable and don't need intelligence wrapped around them. They just need governed access. HarborGate registers them, manages who can call them, and logs every interaction. This includes human-contributed data: when a BI analyst wants to make their market model available to the network, they register it as a data source with access policies they define. No API needs to be built. The hub handles governance.

Agents. AI agents that add reasoning, interpretation, and judgment. A customer support agent that synthesizes information from multiple sources to resolve a complaint. An R&D agent that translates failure data into design recommendations. A domain expert's personal agent that can answer unstructured questions based on knowledge they've embedded in it. Agents belong where interactions require intelligence, not where system-to-system integration already works.
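To make the two resource types tangible, here is a rough sketch of what registration could look like. Everything in it is an assumption for illustration: the `Resource` fields, the in-memory `Registry`, and the resource and team names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class ResourceKind(Enum):
    TOOL = "tool"    # APIs, databases, registered datasets: governed access only
    AGENT = "agent"  # resources that add reasoning and judgment


@dataclass
class Resource:
    name: str
    kind: ResourceKind
    owner: str                                          # accountable human or team
    read_access: set[str] = field(default_factory=set)  # teams the owner allows


class Registry:
    """Hypothetical in-memory stand-in for the hub's catalog."""

    def __init__(self) -> None:
        self._resources: dict[str, Resource] = {}

    def register(self, resource: Resource) -> None:
        if resource.name in self._resources:
            raise ValueError(f"{resource.name!r} is already registered")
        self._resources[resource.name] = resource

    def get(self, name: str) -> Resource:
        return self._resources[name]


registry = Registry()
# An existing system integration.
registry.register(Resource("erp.orders", ResourceKind.TOOL, owner="it-platform",
                           read_access={"support", "finance"}))
# A BI analyst's market model, registered as a data source with the
# analyst's own access policy. No API had to be built for it.
registry.register(Resource("bi.market_model", ResourceKind.TOOL, owner="bi-analyst",
                           read_access={"finance"}))
# An agent, registered the same way as any other resource.
registry.register(Resource("repair.agent", ResourceKind.AGENT, owner="service-team",
                           read_access={"support"}))
```

Note that the analyst's model and the ERP endpoint share one record shape. Only the `kind` field separates tools from agents.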

For both resource types, HarborGate provides:

Discovery and registration. Any participant in the network can ask: "What's available, and what do I need to access it?" The hub is a living catalog of the organization's tools and agents, covering not just endpoints but capabilities, data contracts, and access requirements.

Authorization and access control. Contextual, data-driven permissions. A customer-facing agent gets scoped access to order data for the customer it's serving. A finance agent with broader privileges authenticates once for read access across ERP tools. Permissions reflect the actual organizational knowledge graph, not just static roles.

Tracing and auditability. Every interaction flows through the hub: agent-to-tool, agent-to-agent, tool-to-tool. Complete audit trails answer: "Why did this agent access this data? What was the reasoning chain? Who authorized it?"
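The three capabilities above fit together in a single call path. The sketch below is one possible shape under stated assumptions: the `Hub` class, the policy signature, the `ACTIVE_CUSTOMER` session table, and the free-text `reason` field (a crude stand-in for a reasoning chain) are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable

# A policy receives the caller and the request context; True means allow.
Policy = Callable[[str, dict[str, Any]], bool]


@dataclass
class Entry:
    handler: Callable[[dict[str, Any]], Any]
    policy: Policy
    requirements: str  # human-readable access requirements shown in discovery


class Hub:
    """Hypothetical broker: every call is authorized first and logged either way."""

    def __init__(self) -> None:
        self._entries: dict[str, Entry] = {}
        self.audit_log: list[dict[str, Any]] = []

    def register(self, name: str, entry: Entry) -> None:
        self._entries[name] = entry

    def discover(self) -> dict[str, str]:
        """What's available, and what is needed to access it."""
        return {name: e.requirements for name, e in self._entries.items()}

    def call(self, caller: str, resource: str,
             context: dict[str, Any], reason: str) -> Any:
        entry = self._entries[resource]
        allowed = entry.policy(caller, context)
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "caller": caller, "resource": resource,
            "context": context, "reason": reason, "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{caller} may not access {resource}")
        return entry.handler(context)


ORDERS = {"c-1": ["order-1001"], "c-2": ["order-2002"]}
ACTIVE_CUSTOMER = {"support-agent": "c-1"}  # hypothetical session table


def orders_policy(caller: str, context: dict[str, Any]) -> bool:
    # Finance reads any customer; support only the customer it is serving.
    if caller == "finance-agent":
        return True
    active = ACTIVE_CUSTOMER.get(caller)
    return active is not None and context.get("customer_id") == active


hub = Hub()
hub.register("erp.orders", Entry(
    handler=lambda ctx: ORDERS[ctx["customer_id"]],
    policy=orders_policy,
    requirements="support: active customer only; finance: read-all",
))

own = hub.call("support-agent", "erp.orders", {"customer_id": "c-1"},
               reason="resolving complaint")
try:
    hub.call("support-agent", "erp.orders", {"customer_id": "c-2"},
             reason="resolving complaint")
except PermissionError:
    pass  # denied, but the attempt is still in the audit log
```

Writing the log entry before the permission check raises is deliberate: a denied request is exactly the kind of event an auditor will want to see.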

Agent Builder

The hub is plumbing. To make agent networks accessible beyond engineering teams, we also build an agent builder designed for domain experts. Teams should be able to configure agents that reflect their workflows, connect them to the hub, and iterate without writing glue code. The builder is where humans define what agents do and set their boundaries. It's also the foundation for phase two: if only developers can build agent networks, the democratization vision doesn't work.

Three Scenarios

Customer Support Agent resolves a complaint. The agent queries the hub for the customer's order (ERP tool, direct data call), checks delivery status (shipping carrier API, direct data call), and asks the Repair Agent whether similar failures have been reported (agent-to-agent, reasoning required). Three interactions, two resource types, one audit trail.

FP&A Agent builds a margin analysis. The agent pulls revenue data from the ERP (tool), cost data from procurement (tool), and requests the BI team's latest market segmentation (a dataset the analyst registered in the hub with finance-team read access). All governed, all traced.

A new team connects to the network. Day one: they register their existing APIs and data sources in the hub. Instant governed access for authorized consumers. A team member registers a curated spreadsheet as a data source. Week two: they deploy a domain agent using the builder that can reason over their data and answer unstructured questions. The network grows organically.

Bootstrapping

HarborGate doesn't require a company to deploy agents before it's useful:

- Start with what exists. Register current APIs, databases, and integrations. Team members register curated datasets. Add governance and tracing to cross-system data flows that already happen.

- Teams add agents. As teams build domain agents through the builder, they plug into the hub. Their agents can immediately discover and access existing resources.

- The network grows. Each new resource makes the hub more valuable for everyone already connected.

This mirrors how companies naturally adopt agents: individual agents embedded in teams first, then networked collaboration, with the hub as enabling infrastructure throughout.

Pattern Collection

Phase one is also research. Working with enterprises, we learn what a well-functioning customer support network looks like, what an FP&A workflow requires, what authorization structures recur across industries. We learn which parts of an organization's operations are structurally similar across companies and which are unique.

This is the foundation for phase two. The organizational patterns we discover become the templates we offer.

Phase 2: Agent Networks as a Service

As LLMs improve, agents become more capable. The organizational patterns we've collected in phase one become reusable. Phase two packages them into deployable templates, so that a small team can stand up the operational backbone of a complex company without building it from scratch.

A founder with a product idea and a team of three selects from a library of proven agent network templates: customer support, finance and accounting, supply chain coordination, sales operations. Each template comes pre-configured with agent roles, authorization structures, and workflows drawn from patterns that actually work in real organizations. The team customizes, connects their specific tools and data, and launches.
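What a template might look like as data is still an open question. As one possible shape, the sketch below treats a template as a set of agent roles with abstract tool slots that a team binds to its own systems. The `Template` and `AgentRole` classes and all slot and tool names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    name: str
    needs: tuple[str, ...]  # abstract tool slots this role depends on


@dataclass(frozen=True)
class Template:
    name: str
    roles: tuple[AgentRole, ...]

    def required_slots(self) -> set[str]:
        return {slot for role in self.roles for slot in role.needs}

    def instantiate(self, bindings: dict[str, str]) -> dict[str, dict[str, str]]:
        """Bind every abstract slot to a concrete tool, or fail loudly."""
        missing = self.required_slots() - set(bindings)
        if missing:
            raise ValueError(f"unbound slots: {sorted(missing)}")
        return {role.name: {slot: bindings[slot] for slot in role.needs}
                for role in self.roles}


support = Template("customer-support", roles=(
    AgentRole("triage", needs=("ticketing",)),
    AgentRole("refunds", needs=("ticketing", "payments")),
))

# The team connects its own tools to the template's slots.
network = support.instantiate({"ticketing": "helpdesk-api",
                               "payments": "payments-api"})
```

Failing on unbound slots keeps the "customize, connect, launch" step honest: a network cannot launch with a role that has nothing to talk to.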

The founder and their team focus on the brand, the creative direction, the product vision, the customer relationships, the strategic judgment. The agent network handles the operational complexity that would previously have required hiring dozens of people before you could even test whether the idea works.

Stakes

This is where the ethical questions sharpen. If phase two only makes existing large companies more efficient, it accelerates the squeeze on the job market: fewer people doing more, gains flowing to shareholders. But if it lowers the barrier to starting and running a company, it creates new economic actors, new brands, new competition, and new jobs in markets that don't exist yet.

The second outcome is possible but not inevitable. It requires deliberately building for the small team, not just optimizing for enterprise efficiency. That means accessible UX, affordable pricing, templates that work without a technical co-founder, and an architecture that doesn't assume enterprise-grade infrastructure.

Humans After Automation

As agents take on more execution, the human role moves from doing and correcting to defining and governing. Humans set goals, judge quality, make trade-offs, and decide what the company stands for. This may be more meaningful than what most people do in large organizations today.

But we're honest about the tension. Not every current job maps onto this new role. The transition will be disruptive. Our commitment is to build tools that create more opportunities than they eliminate, and to measure ourselves against that standard.

Timing

Fragmentation creates an opening. Every major vendor builds agents within their own walled garden. No single player is incentivized to solve cross-platform collaboration, because lock-in benefits them. This is the classic setup for a neutral infrastructure layer.

Companies are hitting the wall. Organizations that deployed individual agents are now asking how to connect them. Authorization across domain boundaries is the first problem they encounter.

Informal knowledge has no home. Analyst models, expert datasets, and curated spreadsheets are everywhere in organizations but have never been discoverable or access-controlled. The hub lets people register this data alongside formal systems with the same governance and tracing.

Compliance requires it. GDPR, SOC 2 audits, and sector-specific regulations all expect data flows to be traceable and auditable. An ungoverned mesh of agents and tools is a compliance risk. A central hub with full tracing addresses this directly.

Neutrality is available. A Salesforce agent broker won't be trusted by SAP shops, and vice versa. The same dynamic that made Stripe the payments layer and Twilio the communications layer applies here. HarborGate becomes more valuable with every connected resource, and network effects compound.

Defensibility

The governance layer itself is conceptually simple. Authorization, tracing, discovery: these are well-understood patterns. Any competent infrastructure team could build a comparable hub, and the big platform players will eventually build their own versions.

What's harder to replicate:

Organizational patterns. Every enterprise deployment teaches us how a real company operates, not the org chart on paper, but the actual flows of information, decision-making, and authorization. Which agent configurations work for customer support in manufacturing versus retail. What authorization patterns break down under pressure. Where humans need to stay in the loop versus where they don't. A competitor can copy our architecture. They can't copy what we've learned from deploying it across dozens of industries. When this becomes the template library in phase two, it's the difference between a blank canvas and a proven operational blueprint.

Network effects within the hub. Every registered resource, whether API, dataset, or agent, makes the hub more valuable for everyone else connected. Once a company's agents, tools, and informal knowledge are catalogued in HarborGate, switching costs are high. Stripe's moat isn't payments technology. It's that ripping it out is painful once it's embedded.

Cross-company network effects. Longer-term, if companies in the same supply chain or industry connect through HarborGate, we get inter-organizational agent communication with shared governance. A supplier's agent talking to a manufacturer's agent through a trusted neutral layer. At that point we're not infrastructure for one company. We're infrastructure for an ecosystem. That's extremely hard to displace.

Trust. If we're the company known for keeping humans in control, with auditability, reversibility, and explicit consent for automation, that reputation becomes an asset in regulated industries. Banks, healthcare, government, defense: these sectors will pay a premium for infrastructure they trust. A competitor can match features. They can't instantly match a track record.

Open Questions

Technical

- What protocol should the hub use? Can we build on emerging standards like MCP and add the governance layer on top?

- Where is the boundary between the hub and existing infrastructure (API gateways, IAM, observability)?

- How do we handle agent identity and trust, especially when agents can spawn sub-agents or be manipulated through their inputs?

- What are the right initial connectors and integrations to make the hub immediately useful?

Product

- What does the agent builder need to look like for non-technical domain experts to use it effectively?

- How do we capture organizational patterns in phase one in a way that generalizes for phase two templates?

- What is the minimum viable agent network template that's useful to a small team?

Ethical

- As agents learn from experience, how do we maintain the principle that humans understand what was learned?

- How do we prevent the enterprise efficiency use case from crowding out the democratization use case?

- What metrics should we track to ensure we're creating more economic actors, not fewer?

- When does automating a domain cross the line from freeing humans to displacing them, and who decides?