20.02 2026 · 6 min read

Part 1: Agents in Context

The automation pattern is familiar. The domain is not.

Early Release: This is a draft version of the article and will continue to evolve.

Co-authored with AI: This article was written in collaboration with AI tools.

Automation has been part of human civilization for centuries. It started with simple tools, led to factories, and has now arrived at the current wave of artificial intelligence. CEOs announce layoffs, some later regret them, AI slop causes disdain, and genuinely impressive capabilities keep emerging. In that chaos, it may seem that AI is completely different from what we've seen before.

I claim that AI follows the same automation pattern as every previous wave. Only the domain it automates is genuinely new, and that changes how organizations need to approach it.

A Familiar Pattern

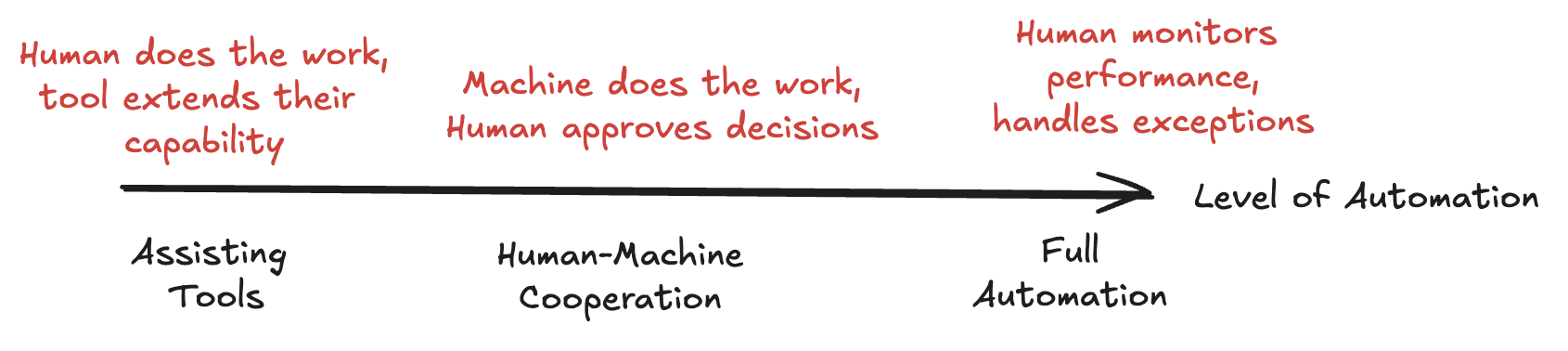

For centuries, tools and machines have extended our ability to make things and shape our environment. The earliest humans used simple tools to reach their goals. Over time, we found ways to cooperate with machines to scale output (mills, farming, factories, etc.). Nowadays, we have entire facilities that run autonomously, like oil refineries or automated warehouses. In these cases, humans only monitor the system or handle rare edge cases.

Every time we have automated something with machines, humans have adjusted into new roles: some cooperate with the machines, some shift into supervising the automation, and others move into entirely new kinds of work enabled by the new capabilities. The degree of automation for a task depends on many factors, but the pattern repeats throughout history (farming, industrialization, digitalization).

AI is no different here: it fits the pattern. We use chatbots in the browser to draft emails or fix files (tool use). We cooperate with coding copilots and AI agents that handle tasks while we review their outputs and manage exceptions (human-machine cooperation to scale output). And we're starting to see self-driving cars and agents that operate independently within defined boundaries (full automation). So, what's different about AI, and are humans going to adjust into new roles as before?

What's new?

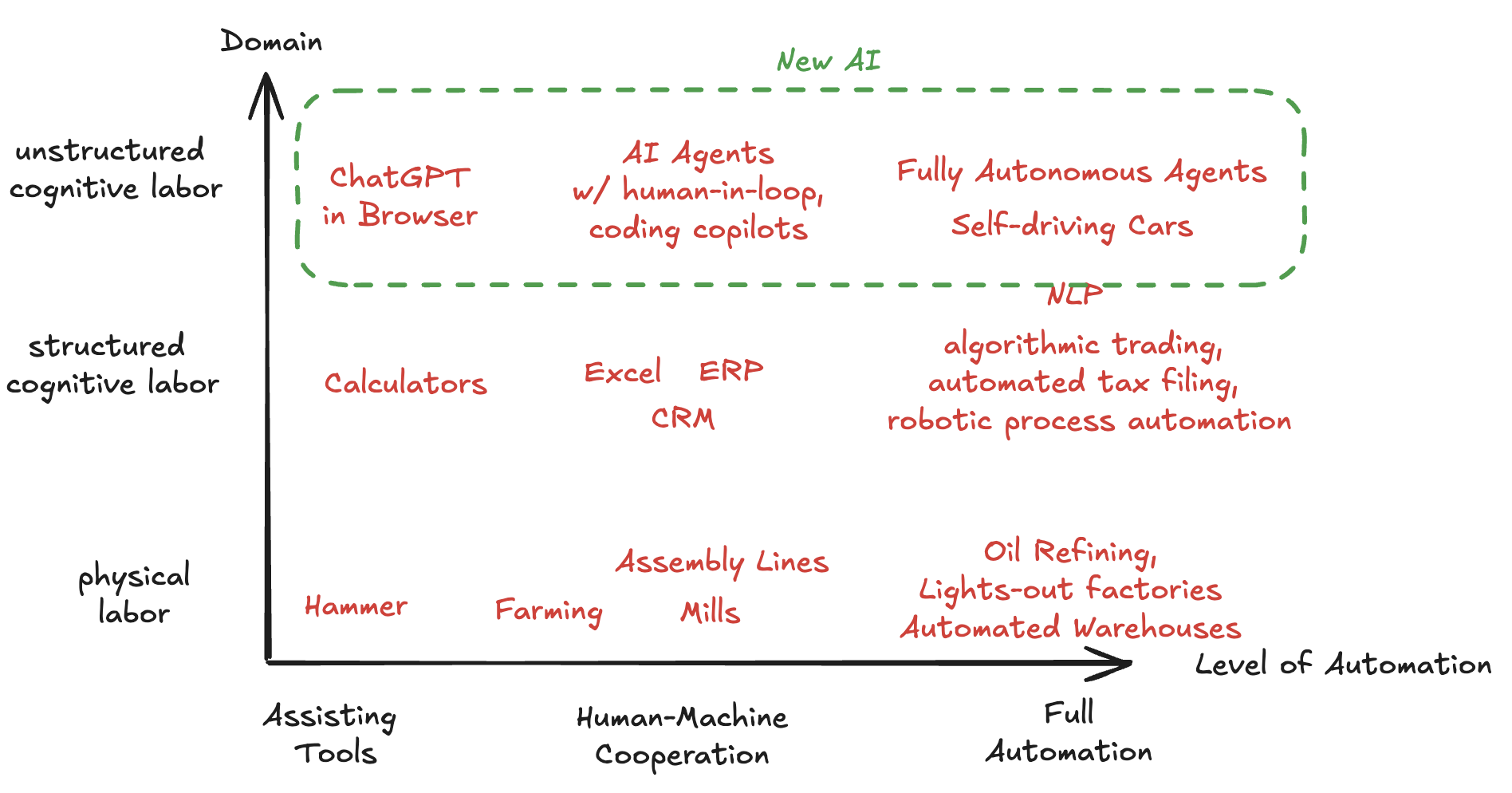

If AI falls into a pattern of automation, where does it differ? Some previous waves of automation externalized physical labor (mills, engines, assembly lines), while others automated structured cognitive labor (calculators, databases, rule-based software). What's new with AI isn't that we're automating cognition (spreadsheets already do that), but that we are automating the kind of cognition that handles ambiguity, novelty, and unstructured environments.

This gives us two dimensions to think about: the level of automation, and the domain being automated. While AI is following a pattern in automation that we've seen many times before, the domain that is automated is completely new. As we'll see, that changes what the human role looks like.

Don't make the mistake of seeing these levels as a necessary progression. Instead, the level of automation needs to match the requirements and risk profile of the task. Some tasks don't fit full automation, and as we'll discuss next, AI agents have their limitations too.

Systems that (don't) Learn

There's a tempting assumption buried in most AI deployment plans: that agents, given enough data, will figure it out. They have access to documents, knowledge bases, retrieval systems. Surely that should be enough, right?

I suggest that this is not enough. There is a difference between relying on a text database and learning from experience. An AI agent can retrieve what's been written down, but after each interaction it resets. What the agent encountered doesn't change what it knows next time. It doesn't notice its own patterns. It doesn't revise its approach after a near miss. But it's exactly in these interactions, in the mistakes it makes and the edge cases it hits, that the most useful knowledge lives.

For structured domains, this is fine. A warehouse robot doesn't need to reflect on its day. But unstructured cognition is defined by ambiguity and edge cases. As a company and its processes develop over time, humans have meetings where they firefight issues, reflect on mistakes, review SOPs, and re-negotiate responsibilities. You can try to emulate these patterns with agents, but they will not reach a human's ability to learn (yet).

As long as agents struggle with learning, they will keep producing undefined behavior in exactly the places that matter most. This is why, for unstructured cognition, Level 3 (full automation) is premature. Level 2, where humans actively cooperate with agents, catch what they miss, and feed what they learn back into the system, is where the system actually improves. Here the human is not a safety mechanism, but the learning mechanism for the agent.
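To make the Level 2 loop concrete, here is a minimal sketch of a human feeding a correction back into an agent's context. Everything in it is a hypothetical stand-in rather than a real agent API: `stateless_agent` only mimics a model call that forgets everything between runs, and `LessonStore`, `handle`, and the refund example are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class LessonStore:
    """Notes written by human reviewers (hypothetical structure)."""
    lessons: dict[str, str] = field(default_factory=dict)

    def record(self, topic: str, lesson: str) -> None:
        self.lessons[topic] = lesson

    def for_topic(self, topic: str) -> Optional[str]:
        return self.lessons.get(topic)


def stateless_agent(topic: str, context: Optional[str]) -> str:
    """Stand-in for an agent call. It keeps no memory between calls and
    can only use what is passed in as context (an assumption of this sketch)."""
    if context is not None:
        return f"[{topic}] following guidance: {context}"
    return f"[{topic}] best guess without guidance"


def handle(
    topic: str,
    store: LessonStore,
    review: Optional[Callable[[str], Optional[str]]] = None,
) -> str:
    """Level 2 loop: the agent drafts, a human optionally reviews, and any
    correction is stored so the next run starts with it in context."""
    draft = stateless_agent(topic, store.for_topic(topic))
    if review is not None:
        correction = review(draft)
        if correction is not None:
            store.record(topic, correction)
    return draft


store = LessonStore()
# First run: no guidance exists yet, and the reviewer supplies a correction.
first = handle("refund over limit", store, review=lambda draft: "escalate to finance")
# Second run: the agent itself has not changed, but its context has.
second = handle("refund over limit", store)
print(first)
print(second)
```

The agent function is identical on both runs. The only thing that improves is the store the human writes to, which is the sense in which the human acts as the learning mechanism here.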

Becoming Teachers

With AI agents operating in unstructured domains, the machine doesn't stay fixed. It needs to evolve continuously: its context refined, its boundaries adjusted, its knowledge updated. As long as agents struggle with learning from experience, the people working alongside these systems need to keep developing them.

This may be an extension of the historical pattern rather than a break from it. Human-machine collaboration has a long history, whether it's a miller using a windmill or an accountant using software to prepare end-of-year reports. But when the domain being automated is judgment and ambiguity, that cooperation starts to look less like operation and more like teaching. The person working alongside the agent needs to understand not just the tool, but the knowledge the tool is trying to work with.

That's the crux of it. The pattern of automation is familiar, but the domain is new. And because the domain is messy, unstructured cognition, the level of automation you choose isn't just an efficiency question. It's a question about how your organization learns. Getting this right requires understanding why knowledge is harder than it looks, which is what we'll explore in the next article.